How generative AI evolved and where it’s heading next

I’m willing to bet you’re already very familiar with ChatGPT and DALL-E. Who isn’t? These popular GenAI tools have shaken up our digital society like nothing before and turned scores of internet users into overnight digital creators.

In my work covering AI developments at WeAreBrain, I’ve watched Generative AI transform from experimental technology into essential infrastructure. The pace of change has been extraordinary: what seemed cutting-edge in 2023 is now standard practice, and capabilities we thought were years away have arrived far sooner than expected.

But what is GenAI?

Generative AI, also known as GenAI, is a subset of artificial intelligence that is reshaping our collaboration with technology by the minute. Rather than simply recognising and following predetermined patterns and rules in existing data, GenAI possesses the unique ability to create.

Without being programmed step by step for each output, GenAI can generate new content such as images, music, videos, and text by learning from datasets, transforming AI from executor to creator. Now that this branch of AI is a commodity, it has significant potential to enhance creativity across various industries and even automate it.

Let me take you through the fascinating history of Generative AI, its pivotal developments, and its trajectory to show just how much impact this technology is having on humanity.

The birth of Generative AI

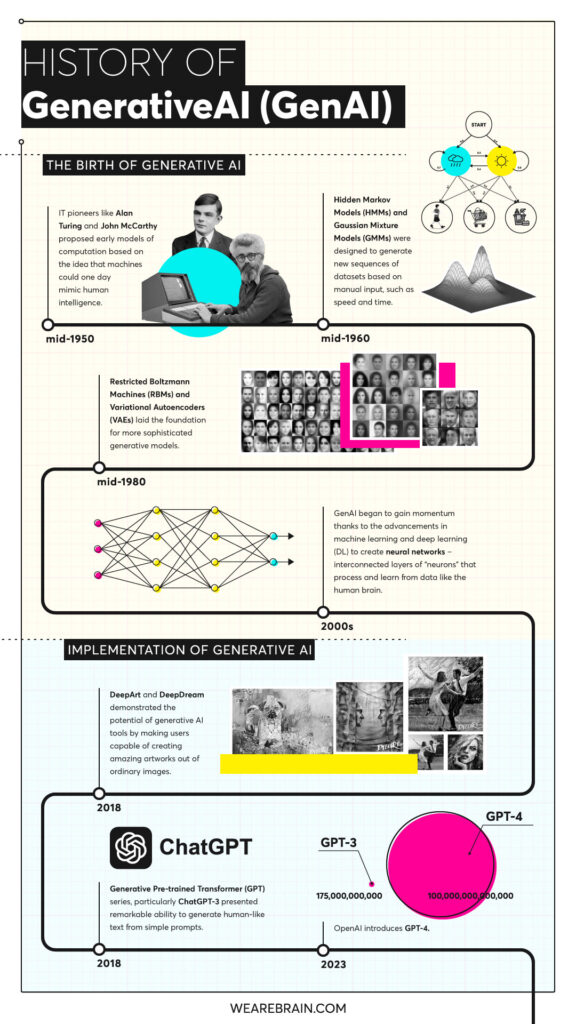

Leading AI research traces the genesis of Generative AI back to the mid-1950s when the concepts of artificial intelligence and machine learning were beginning to take shape.

IT pioneers like Alan Turing and John McCarthy played a pivotal role in laying the foundation for GenAI when they proposed early models of computation based on the idea that machines could one day mimic human intelligence.

Early forerunners of GenAI were Hidden Markov Models and Gaussian Mixture Models. These statistical models were designed to generate new sequences of data, such as speech and time series, based on the patterns in the data they were fitted to.
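To make the idea of a statistical model "generating" a sequence concrete, here is a minimal sketch of sampling from a Hidden Markov Model. The weather states, activities, and probabilities are all invented for illustration, and the helper names `pick` and `generate` are hypothetical rather than taken from any library.

```python
import random

# Hypothetical two-state model; every probability here is made up for illustration.
TRANSITIONS = {
    "sunny": {"sunny": 0.8, "rainy": 0.2},
    "rainy": {"sunny": 0.4, "rainy": 0.6},
}
EMISSIONS = {
    "sunny": {"walk": 0.7, "read": 0.3},
    "rainy": {"walk": 0.1, "read": 0.9},
}


def pick(distribution, rng):
    """Draw one outcome from a {outcome: probability} mapping."""
    outcomes = list(distribution)
    weights = [distribution[o] for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]


def generate(length, start="sunny", seed=None):
    """Sample a new observation sequence from the hidden Markov model."""
    rng = random.Random(seed)
    state = start
    observations = []
    for _ in range(length):
        # Emit an observation from the current hidden state...
        observations.append(pick(EMISSIONS[state], rng))
        # ...then move to the next hidden state.
        state = pick(TRANSITIONS[state], rng)
    return observations


if __name__ == "__main__":
    print(generate(5, seed=0))
```

Each call produces a fresh sequence of activities that follows the model's statistics without copying any stored example, which is the same basic idea, at a vastly smaller scale, behind later generative models.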

Further developments resulted in the creation of models like Restricted Boltzmann Machines and Variational Autoencoders which laid the foundation for more sophisticated generative models.

The evolution of Generative AI

GenAI began gaining real momentum in the 2000s thanks to advances in machine learning and deep learning. Both rely on neural networks: interconnected layers of “neurons” that process and learn from data in a way loosely inspired by the human brain.

Trained to recognise patterns in datasets, neural networks are able to make predictions and decisions without being explicitly programmed to do so.
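As a rough illustration of learning without explicit programming, the sketch below trains a single artificial neuron (a perceptron) on labelled examples of logical OR. It is far simpler than the deep networks discussed here, and the function names, learning rate, and epoch count are illustrative assumptions.

```python
# Labelled examples of logical OR: ((input_a, input_b), expected_output).
# The rule "output 1 if either input is 1" is never written in the code.
EXAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]


def predict(weights, bias, inputs):
    """Fire (return 1) when the weighted sum of the inputs is positive."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(examples, epochs=20, learning_rate=0.1):
    """Perceptron learning rule: nudge the weights after every mistake."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


if __name__ == "__main__":
    weights, bias = train(EXAMPLES)
    for inputs, target in EXAMPLES:
        print(inputs, "->", predict(weights, bias, inputs), "expected", target)
```

The neuron starts with all weights at zero and ends up classifying every example correctly, purely by adjusting its weights in response to its own errors.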

The creative power of GenAI emerged thanks to a specific type of neural network introduced in 2014 by Ian Goodfellow and colleagues: the Generative Adversarial Network (GAN). GANs revolutionised image generation by pitting two neural networks against each other: a generator, which produces candidate data, and a discriminator, which tries to tell generated data from real data. Their competition steadily improves the quality of the generated data.
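For readers who want the formal version, the competition between the two networks is usually written as the minimax objective from the original 2014 GAN paper. Here $D(x)$ is the discriminator's estimated probability that $x$ is real, $G(z)$ is the generator's output for random noise $z$, and $p_{\text{data}}$ and $p_z$ are the data and noise distributions:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to maximise this value by scoring real samples high and generated samples low, while the generator tries to minimise it by producing samples the discriminator mistakes for real ones.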

Further advances, such as Transformers (introduced in 2017 for natural language processing), Variational Autoencoders, and Recurrent Neural Networks, demonstrated AI’s ability to generate new, creative content.

Key milestones in GenAI history

| Era | Development | Impact |
|---|---|---|
| 1950s | Turing & McCarthy foundations | Established AI as possible |
| 2000s | Neural networks & deep learning | Enabled pattern recognition |
| 2014 | GANs (Goodfellow) | Revolutionary image generation |
| 2017 | Transformers architecture | Breakthrough in language models |
| 2022 | ChatGPT launch | Mainstream GenAI adoption |
| 2024 | GPT-4o, Claude 3.5, Gemini 1.5 | Multimodal capabilities arrive |
| 2025 | Video generation (Sora) | Text-to-video becomes viable |
| 2026 | Agentic AI systems | Autonomous task completion |

Significant implementations of GenAI

Generative AI has evolved from experimental tools to production systems demonstrating powerful capabilities across various domains.

Early GenAI image tools such as DeepArt and DeepDream demonstrated the potential by making users capable of creating artworks out of ordinary images. These laid the groundwork for what followed.

The real breakthrough came with the Generative Pre-trained Transformer (GPT) series. ChatGPT, initially built on GPT-3.5, took the world by storm in late 2022 for its remarkable ability to generate human-like text from simple prompts, igniting global imagination about AI’s creative potential.

OpenAI, whose co-founders include Sam Altman and Elon Musk, has played a major role in advancing GenAI capabilities and adoption. But the landscape has evolved dramatically since then.

The current model landscape (2024-2026)

Based on what I’ve seen following these developments, the GenAI field has become intensely competitive. Enterprise spending reached $37 billion in 2025, up from $11.5 billion in 2024, a 3.2x increase. Stanford’s AI Index reports that 78% of organisations used AI in 2024, up from 55% in 2023.

The major players have released increasingly capable models:

OpenAI’s evolution: GPT-4 (2023) → GPT-4o (May 2024) → GPT-4.5 (February 2025) → GPT-5 (August 2025). The company also introduced specialised reasoning models (o1, o3) that perform step-by-step logical problem-solving, particularly effective for complex coding and mathematical tasks.

Anthropic’s breakthrough: Claude 3 Opus (March 2024) → Claude 3.5 Sonnet (June 2024) → Claude 4 Opus and Sonnet (May 2025). Claude 4 Opus scores 72.5% on the SWE-bench Verified coding benchmark and can perform autonomous programming tasks for hours. Claude has become particularly strong for long-context analysis and code generation.

Google’s advancement: Gemini 1.0 (December 2023) → Gemini 1.5 (February 2024, with a 1 million-token context) → Gemini 2.5 → Gemini 3 Pro (November 2025). Google’s models now lead in multimodal capabilities, seamlessly handling text, images, and video.

Leading GenAI models comparison (2025-2026)

| Model | Strengths | Context Window | Key Use Cases |
|---|---|---|---|
| GPT-5 / GPT-4.5 | General reasoning, creative writing | 128K tokens | Content creation, brainstorming |
| Claude 4 Opus | Code generation, long documents | 200K tokens | Software development, analysis |
| Gemini 3 Pro | Multimodal understanding | 1M+ tokens | Video analysis, complex research |
| o3 (OpenAI) | Advanced reasoning | Variable | Mathematical proofs, logic problems |

Video generation arrives

One of the most significant developments I’ve witnessed is the arrival of text-to-video capabilities. OpenAI’s Sora, previewed in February 2024 and released publicly that December, generates realistic videos from text prompts, and Sora 2 followed in 2025. Google’s partnerships with video platforms and tools like Runway Gen-3 have made professional-quality video generation accessible. This represents a fundamental shift in content creation capabilities.

Multimodal and agentic AI

The latest models don’t just process text anymore. Gartner predicts that by 2030, 80% of enterprise software will be multimodal (up from less than 5% in 2024), handling text, images, video, audio, and code simultaneously.

More significantly, agentic AI (systems that act autonomously to complete complex tasks) has emerged. Gartner also predicts that by 2028, 33% of enterprise applications will incorporate agentic AI capabilities, up from less than 1% in 2024. We’re already seeing organisations deploy agents that handle customer support, code reviews, and data analysis with minimal human oversight.

The ethical considerations of GenAI

As Generative AI advances, we must address evolving ethical and legal concerns that have become increasingly urgent.

The AI control and safety challenge

The AI control problem remains critical: ensuring artificial intelligence aligns with humanity’s values and works for us, not against us. Human biases embedded in algorithms concern us now, but as systems become more autonomous and capable, these challenges intensify.

Recent analysis from 2025 shows AI-related incidents rising sharply. AI-generated videos are being used at unprecedented rates in political misinformation campaigns. Chatbots have been linked to serious harms. Major language models have exhibited concerning behaviours in testing scenarios.

The transparency problem

The “black box” problem persists: understanding how generative models arrive at their outputs remains difficult. Without transparency, we can’t verify whether information is accurate and free from bias or discrimination. This becomes especially problematic as enterprises deploy AI for critical decisions.

Harmful content and deepfakes

The potential for GenAI to generate harmful or misleading content has materialised in concerning ways. Deepfake technology showing political figures making statements they never made threatens democratic processes. The rise of AI-generated virtual influencers brings these capabilities into the mainstream, with immense implications for shaping public opinion through synthetic content.

Advanced image and video generators capable of creating realistic, explicit content have forced platforms and regulators to grapple with non-consensual deepfakes and content-filtering mandates.

Intellectual property challenges

GenAI poses ongoing intellectual property and copyright issues. The legal implications of content generated using existing creative works remain unclear, particularly regarding copyright infringement, unlicensed content in training data, and ownership. Lawmakers continue establishing protocols for applying existing intellectual property laws to these new capabilities.

Regulatory response

Progress is happening. The European Union’s AI Act entered into force in August 2024 and is being phased in under a tiered risk framework: its bans on “unacceptable risk” practices (such as certain biometric surveillance) have applied since February 2025, obligations for general-purpose AI models have applied since August 2025, and strict obligations for high-risk systems are scheduled to follow. Similar frameworks are emerging globally through the OECD, UN, and regional bodies.

The future of GenAI

The future of Generative AI and our society appears increasingly intertwined with each new release. From what I’ve observed covering AI developments, several trends are shaping what comes next.

From experimentation to value delivery

The shift across enterprises is dramatic: GenAI is moving from “interesting experiment” to “show me the ROI.” Research indicates 74% of enterprises using GenAI are seeing returns on investment, with pressure mounting to demonstrate measurable business value.

Specialisation and domain expertise

While general-purpose models perform well across broad applications, we’re seeing increasing demand for GenAI specialised by sector. Vertical AI solutions, targeting specific industries like healthcare, finance, or legal, captured $3.5 billion in 2025, nearly 3x the $1.2 billion invested in 2024. These specialised systems understand industry context and regulatory requirements in ways general models cannot.

Sustainable AI development

As models grow more complex, their energy footprint becomes a serious concern. Researchers are developing more efficient algorithms, specialised hardware, and carbon-neutral data centres. In September 2025, University of Florida researchers announced a photonic-computing chip that performs key AI computations using light instead of electricity, promising drastically lower energy consumption.

The human element remains critical

Despite AI’s advancing capabilities, the technology that resonates most combines computational power with human creativity and judgment. Research will certainly develop more accurate and diverse models, opening exciting possibilities in music, design, art, and film. However, success will require balancing innovation with regulation, ensuring GenAI is used ethically and responsibly.

The most successful AI implementations aren’t those that replace humans, but those that amplify human capabilities, giving professionals more time for complex creative work while AI handles repetitive tasks.