Two full cycles of AI hype have collapsed under their own weight before this one. The first AI winter came in the 1970s, when early systems that looked impressive in controlled conditions couldn’t survive contact with real-world complexity. The second followed in the late 1980s, when expert systems, rule-based programmes designed to replicate human decision-making, proved too brittle, too expensive to maintain, and too dependent on assumptions that didn’t hold. In both cases, the failure pattern was the same: a mismatch between what the technology could actually do and what organisations had convinced themselves it could do.

That pattern matters in 2026, because we are in the middle of another cycle. The tools are genuinely more capable this time. The tendency to misplace ourselves in the timeline, to treat whatever layer we’re currently working at as the destination, is exactly the same.

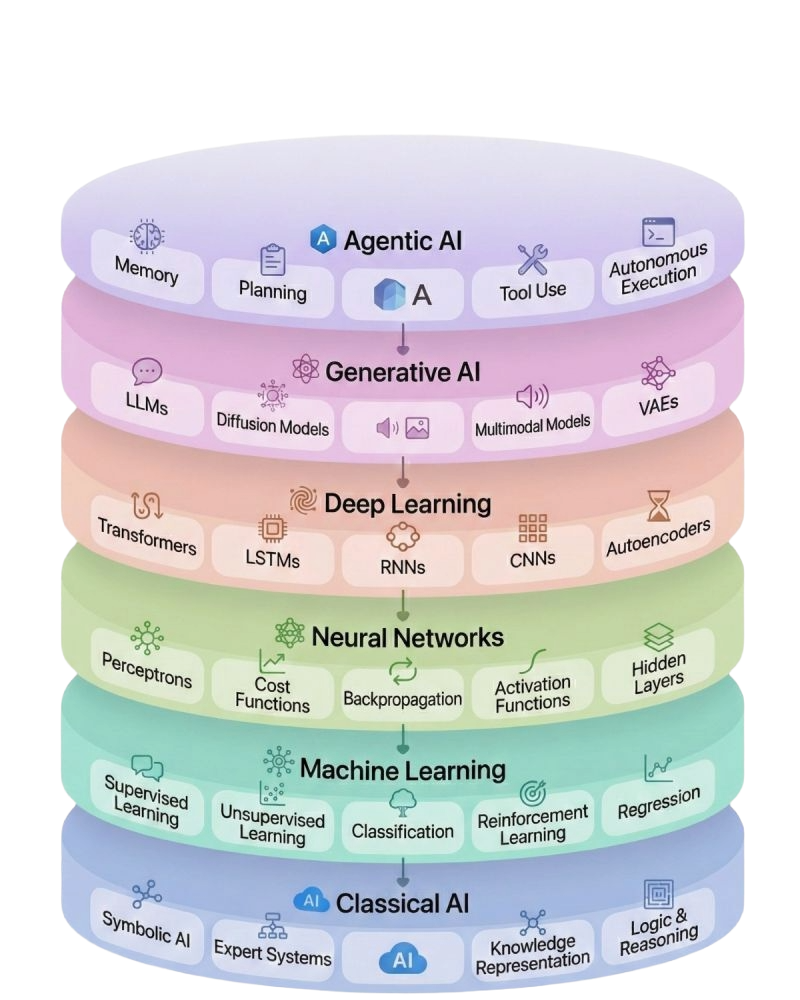

The layers are a timeline

The AI stack that exists today accumulated gradually, each layer making possible what came next. Understanding that sequence is more useful than any tool evaluation, because it tells you where you actually are.

Classical AI (symbolic logic, rule-based systems, and expert systems designed to imitate human specialists) gave machines the ability to follow instructions in narrow, predictable contexts. As soon as the context became complex or unpredictable, those systems reached their limits. That brittleness, combined with expensive compute and scarce data, is what drove the AI winters.

The turning point came in the 1990s and 2000s. Data volumes exploded. Computing power scaled massively. The logic shifted from programming explicit rules to training models to detect patterns in data. This was machine learning, and it made AI genuinely useful for predicting, classifying, and recommending, even if it remained the preserve of specialist technical teams.
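For readers who want to see the shift in miniature, here is a small sketch. It is illustrative only: the transaction amounts, labels, and function names are invented for this example, and real machine learning uses far richer models than a single threshold. The first function is a rule written by a person; the second derives its rule from labelled examples.

```python
# Illustrative sketch: all data and names here are hypothetical.

def rule_based_flag(amount: float) -> bool:
    """Classical approach: an expert writes the rule explicitly."""
    return amount > 1000.0  # threshold chosen by a human


def learn_threshold(examples: list[tuple[float, bool]]) -> float:
    """Learning approach in miniature: choose the threshold that
    misclassifies the fewest labelled examples."""
    candidates = sorted(amount for amount, _ in examples)

    def errors(threshold: float) -> int:
        # Count examples where the prediction disagrees with the label.
        return sum((amount > threshold) != flagged for amount, flagged in examples)

    return min(candidates, key=errors)


def learned_flag(amount: float, threshold: float) -> bool:
    """Apply the rule that was derived from data."""
    return amount > threshold


# Invented history of (amount, was_flagged) pairs.
history = [
    (120.0, False), (250.0, False), (400.0, False),
    (650.0, True), (900.0, True), (1500.0, True),
]

threshold = learn_threshold(history)
print(threshold)                       # threshold derived from the examples
print(rule_based_flag(800.0))          # the hand-written rule's verdict
print(learned_flag(800.0, threshold))  # the learned rule's verdict
```

The hand-written rule stays fixed until someone edits it. The learned rule moves whenever the examples change, which is both the strength of the approach and the reason data quality matters so much.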

Neural networks extended that idea further: layered architectures, weighted connections, the ability to identify patterns too complex for simpler models. Deep learning pushed it further still, with very large datasets, deep neural networks, and cloud infrastructure at scale. These systems could generate text, images, code, voice, and video. Our AI in Practice workbook is precise on what that means: they produce outputs based on probabilities learned from existing data. Keeping that in mind matters when you’re deciding what to trust.
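To make "outputs based on probabilities learned from existing data" concrete, here is a deliberately tiny sketch. It is a toy word-pair counter, not how modern generative models are built, and the corpus and function names are invented for illustration. The principle it shows is the same, though: the system counts what tended to follow what, then samples from those counts.

```python
import random
from collections import Counter, defaultdict

# Toy illustration: the corpus and all names are hypothetical.


def train_bigrams(text: str) -> dict:
    """Count which word follows which in the training text."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts: dict, word: str) -> dict:
    """Turn the raw counts for one word into probabilities."""
    followers = counts.get(word, {})
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}


def generate(counts: dict, start: str, length: int, seed: int = 0) -> list:
    """Sample a sequence word by word from the learned counts."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(length - 1):
        options = counts.get(output[-1])
        if not options:  # nothing ever followed this word in the data
            break
        words, weights = zip(*options.items())
        output.append(rng.choices(words, weights=weights)[0])
    return output


corpus = "the model predicts the next word and the model repeats the pattern"
counts = train_bigrams(corpus)
probs = next_word_probabilities(counts, "the")
print(probs)
print(" ".join(generate(counts, "the", 5)))
```

Nothing in this sketch checks whether the output is true. It can only echo patterns present in its training text, which is why the question of what to trust comes up at all.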

The real breakthrough for organisations, though, was accessibility. Generative AI moved out of laboratories and into everyday tools. For the first time, working with AI didn’t require a technical team. The barrier dropped to a prompt. That shift is what drove the adoption curve of the last three years, and it’s why the generative layer is where most organisations are working today.

The diagram above makes the structural logic of this visible. Each layer sits on the one beneath it. Each one assumed the previous layer’s foundations were solid before it could build on them.

Where most organisations actually are

Generative AI is genuinely useful. The ability to synthesise information, draft outputs, accelerate research, and augment thinking at scale is a real capability shift. Organisations working with it thoughtfully are getting real value. The mistake is treating it as the final layer.

Agentic AI, systems that plan, reason, and execute tasks autonomously across multiple steps, coordinating across tools, data sources, and workflows to reach a defined outcome, is arriving faster than most leadership teams have registered. Gartner projects that 40% of enterprise applications will include integrated task-specific agents by the end of 2026, up from less than 5% in 2025. By 2028, 33% of enterprise software applications will include agentic AI, enabling 15% of day-to-day work decisions to be made autonomously. Are your organisation’s foundations moving at the same pace?

What the AI winters should tell us about skipping layers

The organisations that struggled most in the first two AI winters shared a common trait: they had overcommitted to a layer before understanding what that layer actually required to work. Expert systems needed clean, well-structured knowledge that most organisations didn’t have. The systems were built anyway, the results were poor, confidence collapsed, and investment dried up.

The same dynamic is visible in current agentic AI data. Gartner predicts that over 40% of agentic AI projects will be cancelled by the end of 2027, due to escalating costs, unclear business value, or inadequate risk controls. That’s a significant failure rate for a technology that’s barely reached mainstream deployment. The reasons are familiar: organisations skipping the groundwork.

As our AI in Practice workbook puts it, AI progresses in cycles, and failures often come from unrealistic expectations. Agentic systems require consistent, accessible data, defined and well-maintained workflows, clear process ownership, and governance frameworks capable of managing systems that act without human instruction at every step. Arriving at the agentic layer without those foundations reproduces the exact conditions that caused the winters in the first place.
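One way to picture those four prerequisites is as a simple checklist. The sketch below is our own illustration, not a tool from the workbook: the class, field names, and example values are hypothetical, and a real assessment would involve far more than four yes/no answers.

```python
from dataclasses import dataclass, fields

# Hypothetical checklist: names and values are invented for illustration.


@dataclass
class AgenticReadiness:
    consistent_accessible_data: bool
    maintained_workflows: bool
    clear_process_ownership: bool
    governance_for_autonomy: bool


def readiness_gaps(readiness: AgenticReadiness) -> list:
    """Return the names of the prerequisites that are not yet in place."""
    return [f.name for f in fields(readiness) if not getattr(readiness, f.name)]


# Example: data and workflows are in good shape, ownership and governance are not.
example = AgenticReadiness(
    consistent_accessible_data=True,
    maintained_workflows=True,
    clear_process_ownership=False,
    governance_for_autonomy=False,
)

gaps = readiness_gaps(example)
ready = not gaps  # ready only when every prerequisite is met
print(gaps)
print(ready)
```

The point of the all-or-nothing check is that a strong result on one prerequisite does not compensate for a missing one.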

The layers, in this sense, are a readiness map. Each one has prerequisites. Each one assumes the layer beneath it is solid.

The practical companion to the bigger picture

Understanding where you sit in the layers is the strategic question. The operational question (what data do you have, how mature are your processes, what governance is actually in place) is what turns that understanding into a plan.

The AI in Practice workbook was built to answer the operational question. It covers the data sorting exercise we use at the start of every engagement, the process readiness assessment, and the governance questions that become non-negotiable once you’re working with systems that execute autonomously. It’s designed for managers and leadership teams who want to move into AI with a clear picture of what they’re actually working with.

The next layer is closer than you think

93% of IT leaders report intentions to introduce autonomous agents within the next two years, and nearly half have already implemented them. The organisations moving purposefully, assessing their data, reviewing their processes, building governance before they need it, will find the transition manageable. The ones waiting until agents are standard will find themselves rebuilding foundations under pressure.

Which layer are you actually ready to build on, and what does the path to the next one look like? If you want to work through that assessment, our AI strategists can help you map your current maturity against the layers and identify where the real gaps are.